How I got B2B SaaS comparison guides to #1 in 2026

Beat bigger competitors, steal their leads, and use AI to scale.

A few weeks ago, I updated six comparison guides (i.e. “best [use case] tools” pages) for a B2B SaaS client. I published the new versions, requested reindexing in Google Search Console, and the new positions started showing up within 24 to 48 hours.

Usually when rankings move that fast, I expect Google to test the page for a few days and settle it lower. That didn’t happen. The gains stayed. And this wasn’t one lucky guide. It was consistent across all six.

This was a crowded category too. The competitors have stronger domains, more backlinks to their guides, and sometimes 2x to 10x more backlinks overall than my client.

And yet we got to #1 on some of the main keywords with zero backlinks to the guide itself. Backlinks matter, but this was a good reminder that you can beat stronger sites if the page is genuinely better.

What the results looked like

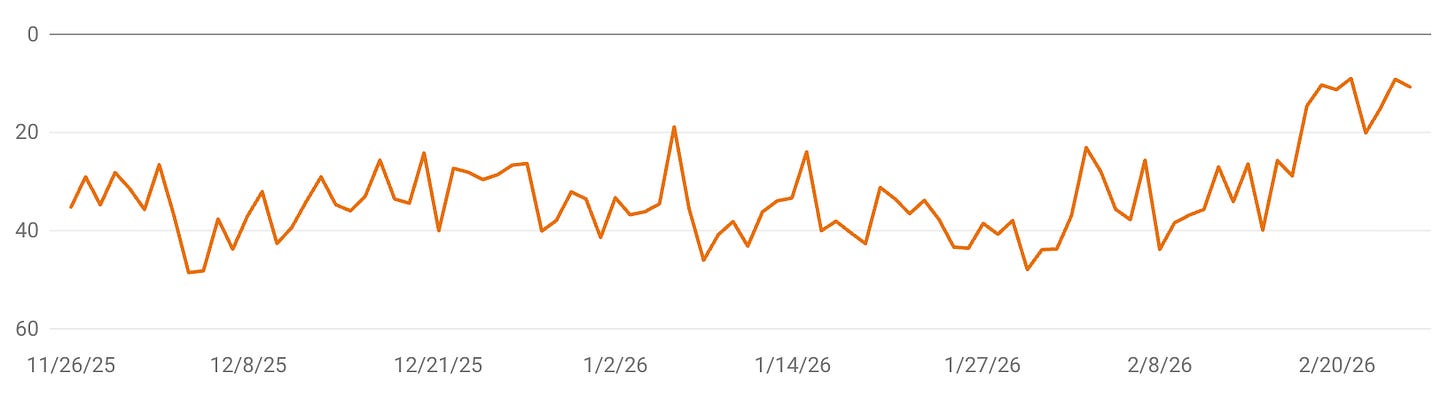

The chart below shows the movement. You can see a sharp jump in average position, roughly from page-four territory to the top few spots.

Traffic roughly tripled on those guides on average, and on some it was more than that.

What I changed

Most of the changes were common-sense improvements. Nothing earth-shattering. But they made the pages much more useful and much more credible.

I replaced generic pros and cons with review-backed comparisons. Instead of repeating product messaging, I focused on what users actually like, what they complain about, and where each tool is a bad fit.

I added real quotes from competitor reviews. That made the content feel less like a brand pretending to compare tools fairly and more like an actual buyer resource.

I included a full list of competitors, not just a convenient shortlist. Each one with its biggest strength, biggest weakness, and pricing summary, all in a table.
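As a rough illustration of that table structure, here is a minimal Python sketch that renders one row per competitor. The `Competitor` class, the `render_table` function, and the sample entries are all hypothetical placeholders, not the actual tooling or data behind these guides.

```python
from dataclasses import dataclass


@dataclass
class Competitor:
    name: str
    strength: str   # biggest strength, ideally backed by reviews
    weakness: str   # biggest weakness, ideally backed by reviews
    pricing: str    # one-line pricing summary


def render_table(competitors: list[Competitor]) -> str:
    """Render one Markdown table row per competitor, in the order given."""
    header = "| Tool | Biggest strength | Biggest weakness | Pricing |"
    divider = "| --- | --- | --- | --- |"
    rows = [
        f"| {c.name} | {c.strength} | {c.weakness} | {c.pricing} |"
        for c in competitors
    ]
    return "\n".join([header, divider, *rows])


# Placeholder entries, not real products or real pricing.
example = [
    Competitor("Tool A", "Fast setup", "Limited reporting", "From $X/user/month"),
    Competitor("Tool B", "Deep integrations", "Steep learning curve", "Custom quote"),
]
print(render_table(example))
```

Keeping every competitor in the same four fields makes it hard to quietly skip a weakness or a price. Note that this sketch does not escape `|` characters inside cell text, so those would need handling in real use.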

I made the bias explicit. There’s a section at the top acknowledging we’re biased but that we tried to be as fair as possible. I also included flaws of my own client’s product. I think this matters more than people want to admit.

I tightened the page structure for LLMs. Better intros that give the answer upfront, key takeaways, cleaner tables. That’s about it for the LLM side.

I improved page speed. The pages scored below 50 before; now they score around 60. I’d like to go higher, but there’s a lot I can’t clean up myself. Even this improvement seems to have helped, though.

What I think actually made the difference

The review angle changed the quality of the pages more than any SEO trick. Once the content stopped sounding like templated comparison copy and started sounding like a serious evaluation, the guides got better for readers fast.

Page speed is the other candidate. I’m not claiming performance alone got us to #1, but when a page is already competing well, marginal improvements add up.

Some things didn’t move the needle much. I already had schema markup. I added FAQs and cleaned up some schema details, but I don’t think that mattered much. I was already front-loading content with a summary table near the top too.
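For readers who haven’t set up FAQ schema before, here is a minimal sketch of generating schema.org `FAQPage` JSON-LD in Python. The `faq_jsonld` helper and the sample question are hypothetical, and this is only one way to produce the markup, not necessarily how these pages do it.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder question and answer, for illustration only.
markup = faq_jsonld([("Is Tool A a good fit for small teams?", "It depends on the use case.")])
print(markup)
```

The resulting string would go inside a `<script type="application/ld+json">` tag on the page.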

So my conclusion is simple. I was already doing a lot of things right. The extra usefulness, the extra credibility, and the performance lift were enough to push these guides over the line.

Comparison content and LLMs in 2026

When people talk about comparison content in 2026, they often want some new LLM-specific playbook. I don’t think that’s where the main gains are. Most of what worked here was still standard SEO work.

For on-page LLM optimization, the list is short. Give the answer early. Add key takeaways. Use clear tables. Make each section easy to parse. That’s it.

The bigger LLM challenge is off-page. If you want your product to show up in LLM answers, you need other guides and websites mentioning it. That’s a separate effort and one I’m still working on. But the on-page LLM work is a small part of the picture.

How I built all of this without writing a line

The content itself is now drafted almost entirely by Claude. I haven’t written a single line of these guides.

But can you really call it automated? I’ve spent about a year iterating on the system behind it: templates, styleguides, examples, instructions, tools. The process is mine. And everything goes through me.

A big reason the output is strong is that my Obsidian vault is full of competitor research. I research each competitor’s strengths, weaknesses, pricing, and reviews myself. The quotes in the guides aren’t hallucinated by an LLM. They’re in my vault because I found them and verified them.
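One way to back up that kind of check mechanically is to flag any quote in a draft that does not appear in the research notes. The sketch below is an assumption-heavy illustration: it assumes quotes are written as Markdown blockquote lines starting with `> ` and that the notes are available as plain strings. The function names are hypothetical, and it does not replace reading the source review yourself.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, unify curly quotes, and collapse whitespace for comparison."""
    text = text.replace("\u2019", "'").replace("\u2018", "'")
    text = text.replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def unverified_quotes(draft: str, notes: list[str]) -> list[str]:
    """Return blockquoted lines in the draft that appear in none of the notes."""
    corpus = [normalize(note) for note in notes]
    missing = []
    for line in draft.splitlines():
        if line.startswith("> "):
            quote = line[2:].strip()
            if not any(normalize(quote) in note for note in corpus):
                missing.append(quote)
    return missing


# Placeholder note and draft, not real review text.
notes = ["Review, Tool A: The onboarding took us two days, not two weeks."]
draft = (
    "Intro paragraph\n"
    "> The onboarding took us two days, not two weeks.\n"
    "> Support never replied."
)
print(unverified_quotes(draft, notes))
```

Anything the function returns is a quote to trace back to its source or cut before publishing.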

So I can now produce dozens of comparison pages much faster than before. It still takes me a lot of time to fact-check everything, but that’s it. The results are the best I’ve ever had.

LLMs still don’t replace me at all. Without my expertise on positioning driving them, LLMs would generate slop.

The traffic actually converts

The more important question is whether this traffic has buying intent. Rankings are nice, but if comparison pages don’t move people deeper into the funnel, the win is limited.

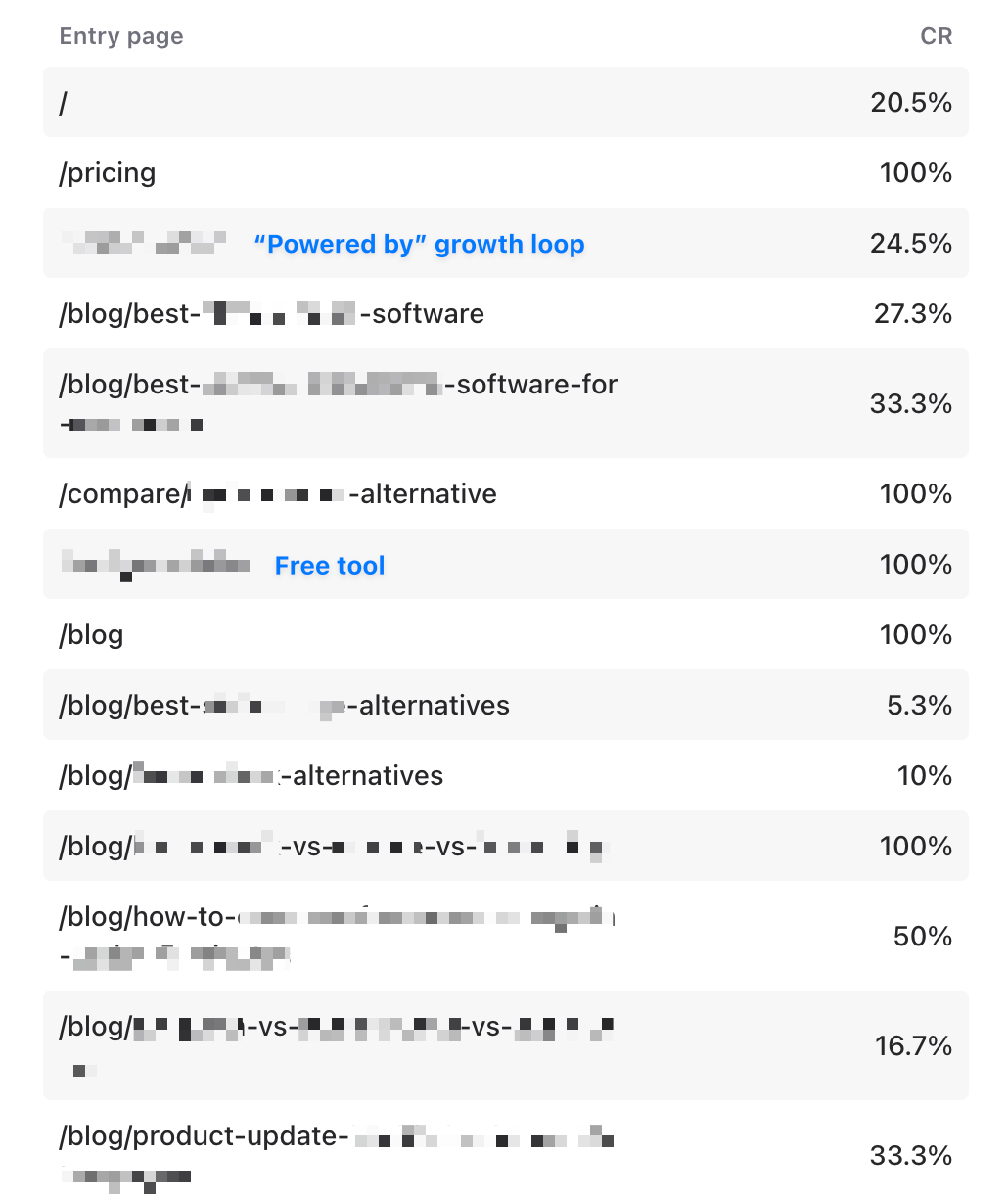

Apart from the homepage and a couple of other assets, most of the traffic moving toward pricing pages comes from comparison content.

I don’t control pricing or the signup flow, so I won’t pretend content owns all of the conversion. But these pages are clearly not bringing empty traffic.

What I’d take away from this

A lot of comparison content is still bad for the same reason it was bad a few years ago. It’s written like SEO content first and buyer content second.

What worked for me was making the guides more honest, more specific, and more useful. Not more bloated. Not more optimized for some imaginary algorithm.

Frankly there’s still a lot I could do (that’s my perfectionist side), but being #1 on a few keywords and #2 or #3 on others when you compete against unicorns is really good.

When did you start testing this?

I saw Google started blacklisting such pages in the past few weeks. Wondering if your pages got affected by it?